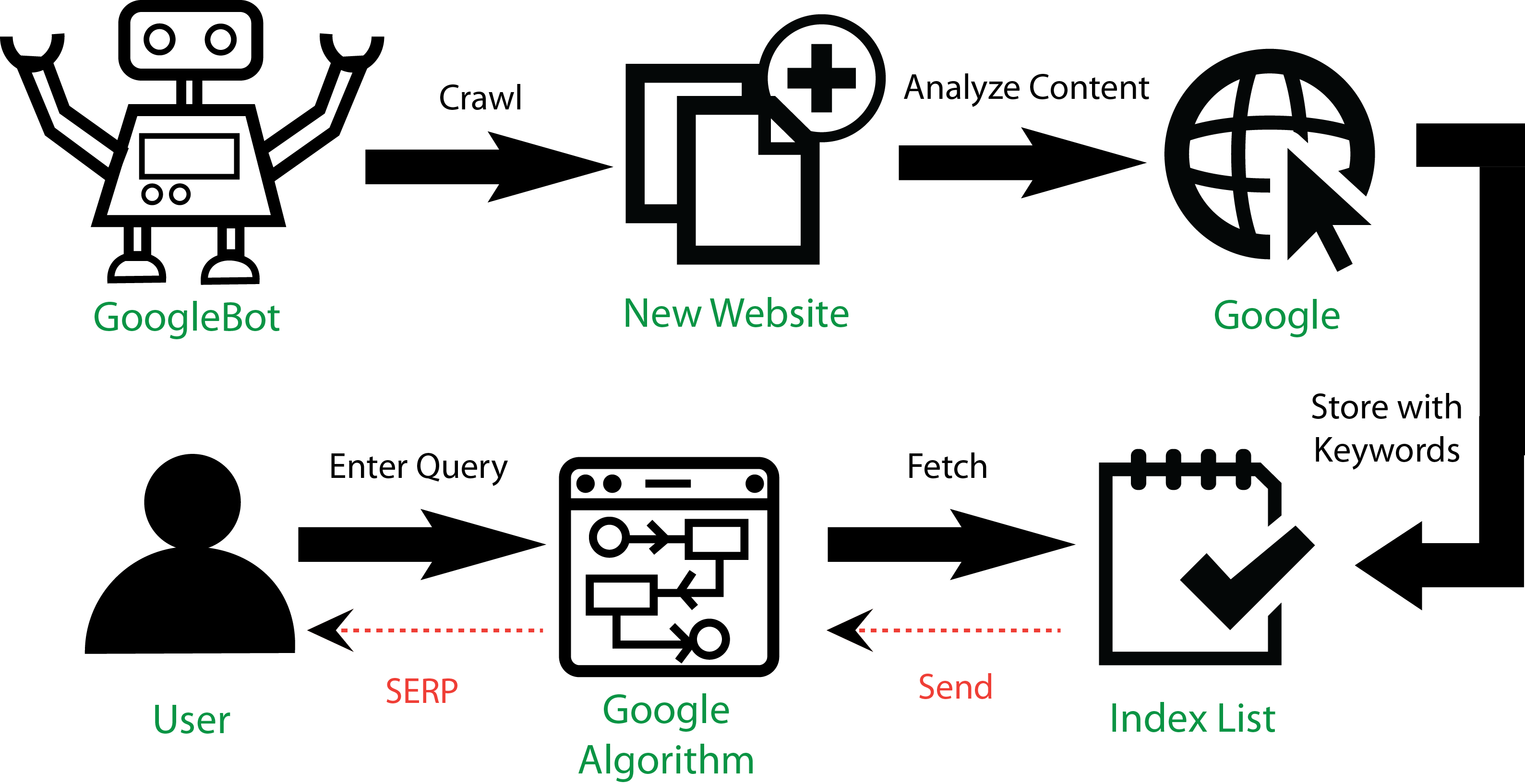

Once you write and publish a post on your website, it goes through three stages before it can appear in search results:

- Crawling

- Indexing

- Ranking

Everyone knows what Google ranking is and what it does for a website. But do you know what crawling and indexing are, and how they happen?

Let’s see!

Crawling and Indexing

Crawling and indexing are two processes that search engines like Google perform periodically.

Crawling is the first phase. Google uses a software program called Googlebot (also known as a crawler or spider) to discover pages it has not seen before and to pick up newly added information on existing and new websites.

Google uses this data to understand the website and what its pages are about. It then associates keywords and topics with the pages it crawled. These pages are stored in a list called the index, which means the new website, or the new data from an existing page, has been added to Google’s database.

When users search on Google with queries or keywords, Google scans the index and shows the most relevant results on the results page.

What if Google does not index the website?

If the search engine does not index your website, it cannot appear on the SERP (search engine results page) or get search traffic. Ranking a website is not possible without passing this phase.

As a result, you can’t reach your marketing goals.

Generally, a new website or webpage can take a couple of days, a week, or even a month to be indexed. There is no definite time for how long Google takes to index a new website.

So how can you get Google to index your website? There are two choices.

Either you wait for Google to index your website, with no guaranteed timeframe.

Or you make it happen by taking a few actions and following the steps below.

This article focuses on how to get Google to index a new website or webpage.

What are the indexing factors?

Aim for an increasing index rate, which means the search engine crawls new content soon after you publish it.

Index rate = Number of pages indexed by Google / Number of pages on your website.
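As a quick worked example of the formula above, here is a minimal Python sketch. The function name `index_rate` and the page counts are made up for illustration; you would take the real numbers from your own reports.

```python
def index_rate(indexed_pages: int, total_pages: int) -> float:
    """Return the share of a site's pages that Google has indexed."""
    if total_pages <= 0:
        raise ValueError("total_pages must be a positive number")
    if indexed_pages < 0:
        raise ValueError("indexed_pages cannot be negative")
    return indexed_pages / total_pages


# Hypothetical numbers: 45 of the 60 published pages are indexed.
rate = index_rate(45, 60)
print(f"Index rate: {rate:.0%}")  # Index rate: 75%
```

A rate close to 100% means almost everything you publish ends up in the index.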

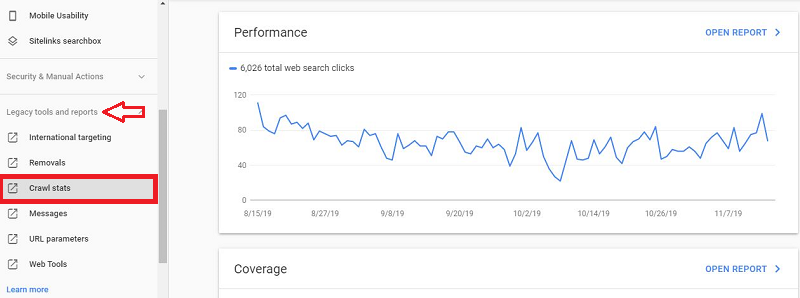

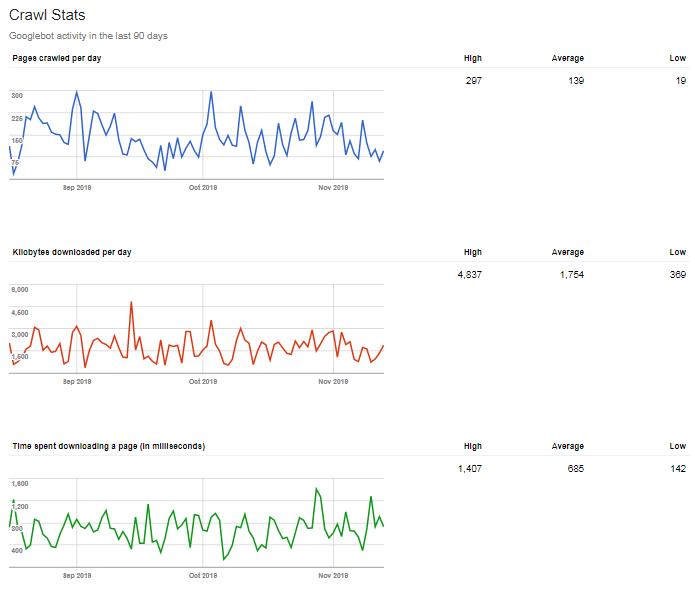

Use Google Search Console to see how often Googlebot crawls your webpages.

Log in to Google Search Console -> Legacy Tools & Reports -> Crawl Stats. (In newer versions of Search Console, this report is under Settings.)

You can see three graphs, colored blue, green, and orange.

The blue graph shows how often Google crawled the site’s pages. Here the graph is trending upward, which means the index rate is increasing.

So what can you do to increase the index rate?

» Update all the older content and add trending topics related to your niche.

» Move to better hosting that may improve the crawl rate and speed of the website.

The loading time of a page is an essential factor that Google considers while crawling and indexing.

The number of pages Google has indexed matters more than how often it crawls them. If you are using WordPress, a plugin like Yoast SEO can generate the sitemap and update it automatically as new pages are added. Otherwise, you have to update the sitemap manually.

How to check if my site is indexed or not?

Go to Google and search for site:yoursite.com. If the site is indexed, the results will list its pages.

The results page also shows the approximate number of pages indexed. If the site is not indexed, there will be no results.

Once your site is indexed, you need to know whether all of your pages are indexed too.

For that purpose, use Google Search Console, formerly known as Google Webmaster Tools (GWT).

Log in to GWT -> Index -> Coverage.

Here you can see the pages on your site grouped as Error, Valid with warnings, Valid, and Excluded.

On the same page, a table lists the affected pages under each specific status.

You can also use GWT to check whether a specific page or post is indexed. Just paste the URL into the URL inspection bar and search.

For an indexed page, the result will say the URL is on Google.

Otherwise, it will say the URL is not on Google.

How to Get Indexed by Google

1. Use Google Search Console

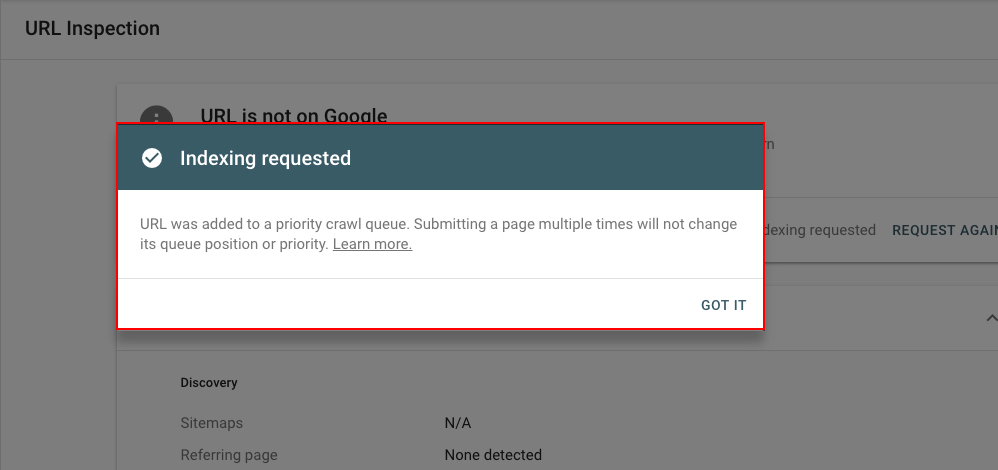

Using GWT to get a website or page indexed is probably the oldest method, but it is still a reliable one.

When you check whether a webpage is indexed using the URL inspection tool, it shows a “Request Indexing” option for both indexed and non-indexed URLs.

After you request indexing, it will show a pop-up message like the one in the image below.

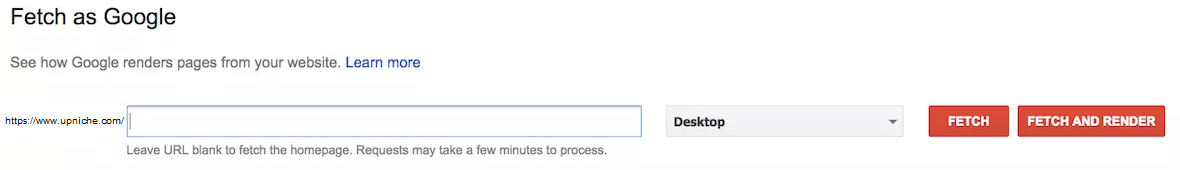

In the previous version of GWT, this option was called "Fetch as Google."

Any new page or post can be submitted for indexing this way. Remember that requests are limited: you can submit only 10 URLs per day, not more than that.

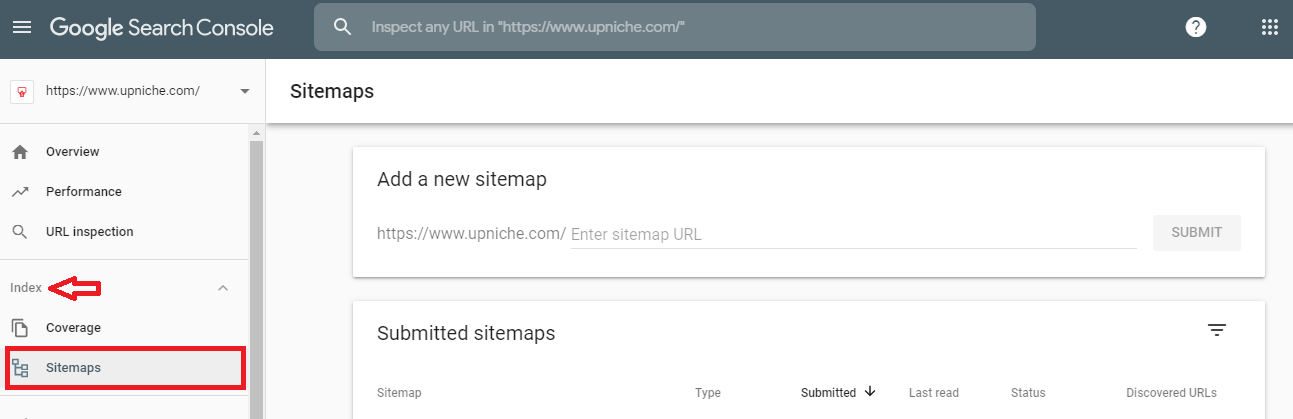

2. Add or update the sitemap

Sitemaps are XML files that list the URLs on a website. A sitemap acts as a navigation guide that helps Google notice changes and understand which pages on the site are essential.

Sitemaps are especially useful if you have a big site with many categories and pages. They reduce the time Googlebot spends discovering your webpages.

Creating a sitemap does not guarantee ranking, but it speeds up indexing and reduces the chance that new pages go unnoticed by Google.

There are many sitemap generator tools and plugins available. You can create a sitemap with one of them and add it to GWT.

GWT -> Index -> Sitemaps -> Add a new sitemap.

You can create different sitemaps for posts, pages, images, videos, and other files on the website.

Regularly check that the sitemap includes all the pages that need to be indexed, and add any that are missing.
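If you are not using a plugin, a sitemap is simple enough to generate yourself. The sketch below uses only Python's standard library and follows the public sitemaps.org XML format. The function name `build_sitemap`, the URLs, and the dates are placeholders for illustration, not part of any tool mentioned above.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Placeholder pages; replace them with the real URLs of your site.
pages = [
    ("https://yoursite.com/", "2024-01-01"),
    ("https://yoursite.com/blog/new-post", "2024-01-15"),
]
print(build_sitemap(pages))
```

Save the output as sitemap.xml at the root of your site and submit its URL through the Sitemaps report.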

3. Add Internal Links

Internal linking is an essential factor in on-page SEO. It also helps a website get indexed quickly, because Google’s crawlers move through a site from page to page by following HTML links.

Make sure all the relevant pages on the website are interlinked with each other.

Always link from your strongest pages to new pages. Search engines usually re-crawl high-authority pages more often, so they will easily find the new pages as they move from link to link.
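To see why this matters, here is a toy model of a crawler following links. It is only a sketch: the `SITE` dictionary stands in for a real website, the page paths are invented, and a real crawler such as Googlebot is far more sophisticated.

```python
from collections import deque
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def discover_pages(site, start):
    """Walk the site breadth-first, following internal links only."""
    seen = [start]
    queue = deque([start])
    while queue:
        path = queue.popleft()
        parser = LinkExtractor()
        parser.feed(site.get(path, ""))
        for link in parser.links:
            if link in site and link not in seen:
                seen.append(link)
                queue.append(link)
    return seen


# Hypothetical site: path -> HTML of the page.
SITE = {
    "/": '<a href="/popular-post">Popular post</a>',
    "/popular-post": '<a href="/new-post">New post</a> <a href="/">Home</a>',
    "/new-post": "<p>Fresh content</p>",
    "/orphan-post": "<p>No page links here</p>",
}

print(discover_pages(SITE, "/"))  # ['/', '/popular-post', '/new-post']
```

The new post is found because a popular page links to it, while the orphan post is never reached because nothing links to it.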

If you don’t know the best pages on your website, use Ahrefs (ahrefs.com).

Log in to Ahrefs -> Enter your domain -> Choose Best by links.

Here you have the list of the top pages on your website. Pick any one of them that is relevant to the new page and add a link to the new page there.

That way, the established page shares some of its value with the new page!

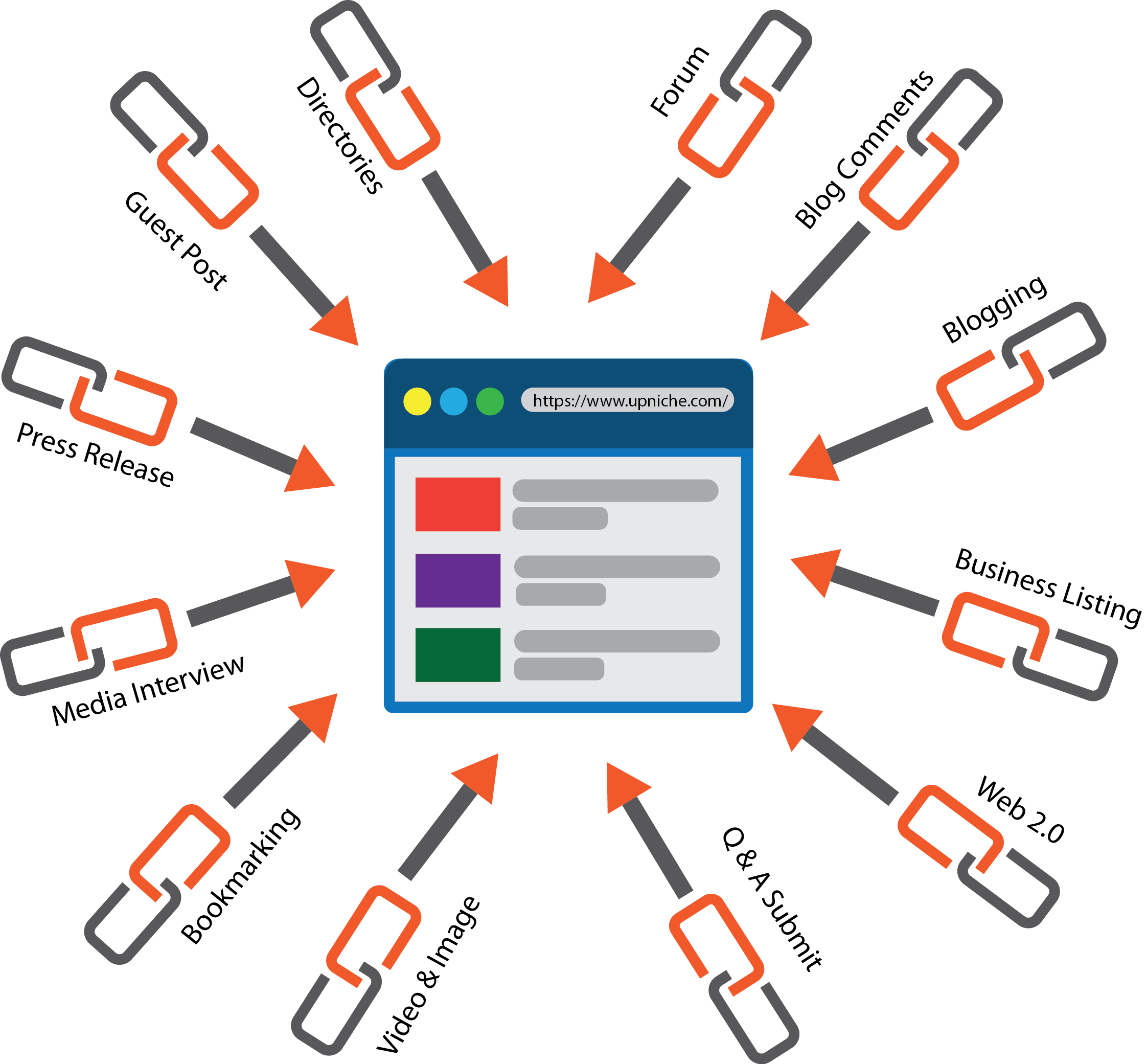

4. Earn External Links

If you have already done SEO, you know the value of external, or inbound, links.

Inbound links are links to your webpage from authoritative and trustworthy websites.

A backlink from a high-authority website will lead Googlebot to your new webpage and make indexing faster.

Recommended ways to get inbound links:

1. Guest posting on relevant sites

2. Answering questions on Q&A sites like Quora, engaging in forums, and commenting on blogs that allow dofollow links.

Make sure the links are natural, relevant, and add value for the reader. Choose popular, authoritative sites to get backlinks from.

5. Get the most from Social Media

Sharing a new page or website on social media is always a great way to get the link discovered and gain viewers.

Social media networks like Facebook, Twitter, and LinkedIn are some quality platforms. Links shared there can be crawled and indexed by search engines.

Create profiles for your website on these social media platforms if you do not have them yet.

Social engagement through likes, shares, and retweets can help improve the indexation rate and ranking position. It also exposes your website to new people all around the world.

Start posting new content on high-traffic sites like Quora and Reddit. These sites have high authority and get traffic from all the search engines, and they are a great medium for connecting with an audience that shares your interests.

6. Publish fresh, high-quality content

Content is everything!

Whether you are building a new website or running one for digital marketing, the content is what drives it.

You should publish fresh, high-quality content to retain your viewers. It also encourages Google to return and re-crawl your website often.

There is no fixed rule for creating a content strategy. Build a plan that covers your business, your customers’ needs, and how you are going to convert them.

Review your strategy often and update it periodically to follow current trends.

Try to schedule posts for a set date and time. Update older content and add new content regularly. This prompts Googlebot to come back to your site.

Google favors websites that are up to date and keep up with trends.

Low-quality content affects the indexation and overall trust of the website. To address that, periodically review the pages on your site and deal with those that have no value:

» Pages that have value to the audience but not to search engines should be set to NOINDEX.

» Press releases and archive pages are created for the audience, but they offer no value to search engines. Disallow these pages from Google’s crawl using the robots.txt file.

» Old blog posts that have traffic and links but no value to the audience should be 301 redirected.

» Pages that do not fit any of the above three conditions should be deleted.
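The four rules above can be read as a small decision procedure. The sketch below is one possible reading of them, not an official checklist: the function name `content_action`, its parameters, and the "keep" outcome for pages that are valuable to everyone are assumptions added for illustration. The second half checks a hypothetical robots.txt with Python's standard `urllib.robotparser` module, using made-up paths.

```python
from urllib.robotparser import RobotFileParser


def content_action(audience_value, search_value, has_traffic_or_links,
                   is_press_or_archive=False):
    """Suggest what to do with a page, following the four rules above."""
    if audience_value and search_value:
        return "keep"  # assumption: good pages are left alone
    if is_press_or_archive:
        return "disallow in robots.txt"
    if audience_value:
        return "noindex"
    if has_traffic_or_links:
        return "301 redirect"
    return "delete"


print(content_action(True, False, False))        # noindex
print(content_action(True, False, False, True))  # disallow in robots.txt
print(content_action(False, False, True))        # 301 redirect
print(content_action(False, False, False))       # delete

# Hypothetical robots.txt that keeps crawlers out of two sections.
ROBOTS_TXT = """\
User-agent: *
Disallow: /press/
Disallow: /archive/
"""

robots = RobotFileParser()
robots.parse(ROBOTS_TXT.splitlines())
print(robots.can_fetch("Googlebot", "https://yoursite.com/press/launch"))   # False
print(robots.can_fetch("Googlebot", "https://yoursite.com/blog/new-post"))  # True
```

Checking your rules this way before uploading robots.txt helps avoid blocking pages you actually want indexed.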

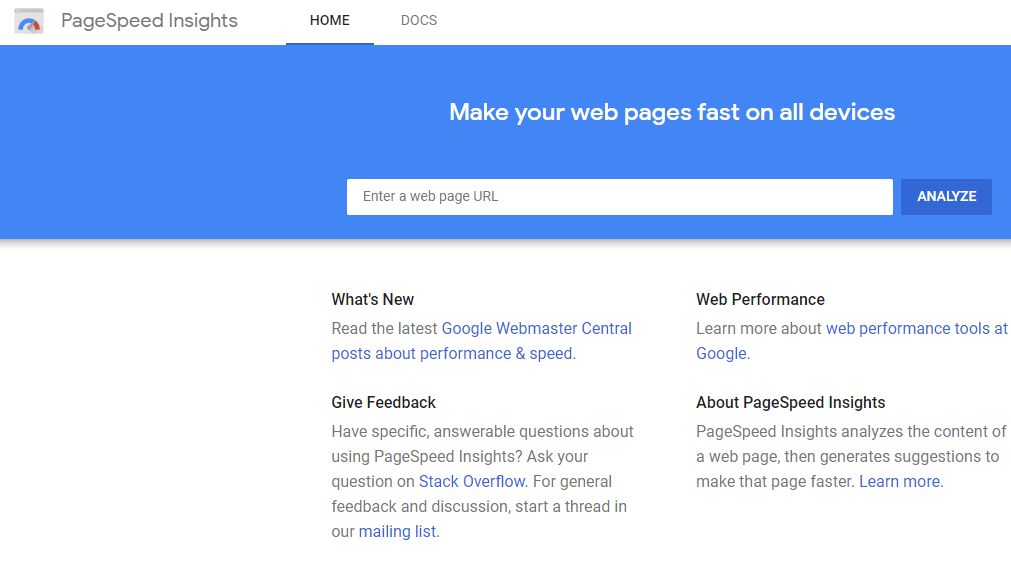

7. Website Speed and Intuitive Navigation

The index rate of a fast-loading website is generally higher than that of a slow one.

Loading time, or the speed of the website, matters when a search engine crawls and indexes the site.

It is also a crucial factor in the ranking of a website.

Run a speed test regularly to check that the website loads quickly. You can use PageSpeed Insights, which is a free tool from Google.

Proper navigation on a website improves indexing and ranking. It not only guides the audience but also encourages the search engine to re-crawl the website. It positively influences the performance and views of the website too.

A clean link structure, navigation menu, categories, and related content on the page make your website easier to understand for both the audience and search engines.

8. “Pinging” Your Website

Pinging a website is the process of telling search engines that a new website or new content is available. It is like inviting Google to visit your website.

There are various pinging services available. Ping-O-Matic is the most commonly recommended one.

Pingler is another free tool for pinging a website.

But remember, you should not ping too often, as that can get your website flagged as spam, and Google may stop returning to sites it considers spam. Keep publishing new content regularly while using these manual push services.
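Ping services of this kind typically accept an XML-RPC call named `weblogUpdates.ping`. The sketch below builds such a request with Python's standard `xmlrpc.client` module and adds a simple guard against pinging too often. Treat the details as assumptions: the endpoint URL is the one commonly documented for Ping-O-Matic and may change, and the 24-hour interval and function names are invented for this example.

```python
import xmlrpc.client
from datetime import datetime, timedelta

# Assumption: commonly documented Ping-O-Matic XML-RPC endpoint.
PING_ENDPOINT = "http://rpc.pingomatic.com/"
# Hypothetical self-imposed limit so the site is not pinged too often.
MIN_INTERVAL = timedelta(hours=24)


def build_ping_request(site_name, site_url):
    """Return the XML-RPC payload for a weblogUpdates.ping call."""
    return xmlrpc.client.dumps((site_name, site_url),
                               methodname="weblogUpdates.ping")


def should_ping(last_ping, now, content_changed):
    """Ping only when there is new content and the last ping is old enough."""
    if not content_changed:
        return False
    return last_ping is None or now - last_ping >= MIN_INTERVAL


def send_ping(site_name, site_url):
    """Send the ping over the network (not called in this sketch)."""
    server = xmlrpc.client.ServerProxy(PING_ENDPOINT)
    return server.weblogUpdates.ping(site_name, site_url)


last_ping = datetime(2024, 1, 15, 9, 0)
now = datetime(2024, 1, 15, 12, 0)
print(should_ping(last_ping, now, content_changed=True))  # False
print(should_ping(None, now, content_changed=True))       # True
print(build_ping_request("Your Site", "https://yoursite.com/"))
```

The first check returns False because only three hours have passed since the last ping, which is the kind of restraint that keeps a site from looking like spam.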